Class action covers thousands of kids

Discord user led police to Grok-generated CSAM of real girls, lawsuit says.

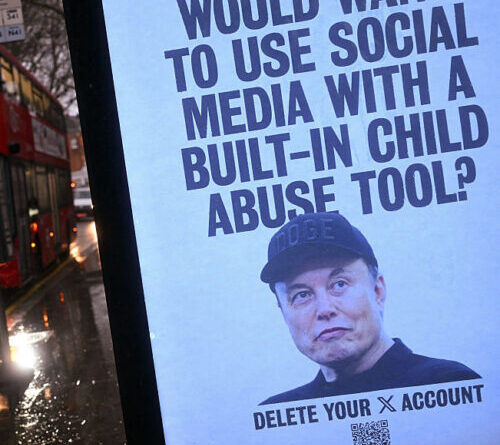

A poster featuring a picture of US billionaire and businessman Elon Musk, urging users of his X social media platform to delete their accounts over the AI chatbot Grok’s CSAM scandal.

Credit: JUSTIN TALLIS / Contributor | AFP

A tip from an anonymous Discord user led police to discover what may be the first confirmed Grok-generated child sexual abuse material (CSAM) that Elon Musk’s xAI can’t easily dismiss as nonexistent.

As recently as January, Musk denied that Grok generated any CSAM during a scandal in which xAI declined to update filters to block the chatbot from nudifying images of real people.

At the height of the controversy, researchers from the Center for Countering Digital Hate estimated that Grok generated roughly 3 million sexualized images, of which about 23,000 depicted apparent children. Rather than fix Grok, xAI limited access to the system to paying subscribers. That kept the most shocking outputs from circulating on X, but the worst of it was not posted there, Wired reported.

Instead, it was generated on Grok Imagine. Digging into the standalone app, a researcher in January found that a little less than 10 percent of about 800 Imagine outputs reviewed appeared to contain CSAM. In an X post following that discovery, Musk continued rejecting the evidence and insisted that he was “not aware of any naked underage images generated by Grok,” emphasizing that he’d seen “literally zero.”

Musk may now be forced to finally confront Grok’s CSAM problem after a Discord user reached out to a victim, prompting law enforcement to get involved.

In a proposed class-action lawsuit filed Monday, three girls from Tennessee and their guardians accused Musk of intentionally designing Grok to “profit off the sexual predation of real people, including children.” They estimated that “at least thousands of minors” were victimized and have asked a US district court for an injunction to finally end Grok’s harmful outputs. They also seek damages, including compensatory damages, for all minors harmed.

A lawyer representing the girls, Annika K. Martin, said in a press release that their lives were “shattered by the devastating loss of privacy and the deep sense of violation that no child should ever have to experience.”

“These are children whose school photos and family pictures were turned into child sexual abuse material by a billion-dollar company’s AI tool and then traded among predators. Elon Musk and xAI deliberately designed Grok to produce sexually explicit content for financial gain, with no regard for the children and adults who would be harmed by it,” Martin said.

The harm is so extensive that, for the girls seeking justice, it’s not enough for Musk to acknowledge only the images that they can show Grok twisted into CSAM, Martin said.

“We intend to hold xAI accountable for every child they harmed in this way,” Martin said.

Police link Grok to Discord CSAM

For one of the girls, the trouble started in December, the complaint said. That’s when she received an anonymous message on Instagram from a Discord user warning that her explicit “pictures” were shared in a folder along with those of many other minors. Eventually the user shared “a series of AI-generated images and videos, which depicted her” along with 18 other minor girls, and then linked her to a Discord server that was created by the perpetrator.

Now over 18, the first victim to get the tip was “disturbed,” the complaint said, finding it hard to distinguish the sexualized images from her real-life content. She immediately recognized which photos the images were based on, most of which were posted to her social media when she was still a minor. And troublingly, she recognized some of the other girls in the folder from her school.

Her first instinct was to contact the other victims she knew, then “ultimately, local law enforcement was contacted, and a criminal investigation was opened,” the complaint said.

Reviewing the Discord evidence, police quickly determined that the perpetrator had access to the first victim’s Instagram “because he had maintained a close and friendly relationship” with her. Searching his phone, police found a third-party app that licensed or otherwise obtained access to Grok, which they concluded the perpetrator used to alter the girls’ photos.

From there, the bad actor uploaded the images to a file-sharing platform called Mega and used them as a “bartering tool in Telegram group chats with numerous other users,” trading away the AI CSAM files “for sexually explicit content of other minors.”

The harms to victims have been extensive, the lawsuit said, citing acute emotional and psychological distress. The victims who know the perpetrator remain unsure whether the Grok-generated CSAM was shared with classmates or distributed to others at their school, the lawsuit noted. One girl fears the scandal will affect her college admissions, while another feels too scared to attend her own graduation.

Even more alarming than any peers discovering the AI CSAM, however, is the fear that the girls will now be stalked due to Grok’s outputs. As the lawsuit explains, “it also appears the victims’ true first names and the name of their school was attached to their files online, meaning other online predators may also be able to identify them, creating a substantial risk for stalking.”

xAI allegedly hosts Grok CSAM

While it was previously reported that Grok Imagine’s paying subscribers were generating more graphic outputs than the Grok outputs that sparked outcry on X, the lawsuit alleges that xAI has also taken other steps to hide how it profits from explicit content that harms real people.

The lawsuit alleges that xAI also sells licenses and access to its Grok AI model to third-party apps like the one their perpetrator used. That arrangement allegedly gives xAI an additional revenue stream while insulating xAI from exposure that third parties are “using xAI servers and platforms to generate CSAM content requested by these apps’ customers,” a press release from their legal team said.

Allegedly, all of the sexually explicit content generated by third parties is hosted on xAI servers, then distributed by xAI.

“xAI has not made Grok’s AI model publicly available and has not licensed Grok in its entirety but rather licenses the use of its servers to these intermediary companies, knowing that any illegal and unlawful content generated through prompts to these applications will ultimately be created and distributed from xAI servers,” the lawsuit said.

Victims allege that this conduct puts xAI squarely in violation of child pornography laws:

On information and belief, xAI had the CSAM of Plaintiffs on its servers after Grok generated their CSAM and then transported and distributed the illegal contraband to its customer/user, namely, the perpetrator, using the cut-out or third-party intermediary application.

They’re hoping the court will finally clarify whether xAI knew Grok was generating CSAM and whether xAI knowingly processed that content on its servers, then chose to distribute it to increase xAI’s profits. There can be no valid excuse for failing to protect minors if the court agrees with victims that xAI violated child pornography laws or owed a duty of care, the lawsuit alleged.

“The gravity of the harm caused by Defendants’ practices vastly outweighs any purported benefit of Defendants’ ‘spicy mode’ or other uncensored content features,” the complaint said. “No legitimate business interest is served by designing an AI image-generation tool to produce CSAM.”

xAI did not immediately respond to Ars’ request to comment. The company has previously blamed users who generated CSAM for the backlash while threatening to suspend users who abuse Grok.

Ashley is a senior policy reporter for Ars Technica, dedicated to tracking social impacts of emerging policies and new technologies. She is a Chicago-based journalist with 20 years of experience.
