As artificial intelligence (AI) models keep growing and getting more power-hungry, researchers are beginning to ask not whether they can be trained, but where. That’s the context behind Google Research’s recent proposal to explore space-based AI infrastructure, an idea that sits somewhere between serious science and orbital overreach.

The idea, called “Project Suncatcher” and outlined in a study uploaded Nov. 22 to the preprint arXiv database, explores whether future AI workloads could be run on constellations of satellites equipped with specialized accelerators and powered primarily by solar energy.

The push to look beyond Earth for AI infrastructure isn’t coming out of nowhere. Data centers already consume a non-trivial chunk of the world’s power supply: recent estimates put global data-center electricity use at roughly 415 terawatt-hours in 2024, or about 1.5% of total global electricity consumption, with projections suggesting this could more than double by 2030 as AI workloads surge.
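As a rough sanity check on those figures, the arithmetic can be sketched in a few lines of Python. The function names are illustrative, and the 2030 case treats “more than double” as a floor of exactly 2x, which is an assumption made only for the sake of the calculation.

```python
# Back-of-the-envelope check of the figures quoted above.
# Function names are illustrative, not taken from any cited report.

HOURS_IN_2024 = 366 * 24  # 2024 was a leap year


def implied_global_twh(data_center_twh: float, share: float) -> float:
    """Total global consumption implied by a data-center figure and its share."""
    return data_center_twh / share


def average_power_gw(annual_twh: float, hours: int = HOURS_IN_2024) -> float:
    """Average continuous draw in gigawatts (1 TWh = 1,000 GWh)."""
    return annual_twh * 1000 / hours


data_center_twh_2024 = 415.0  # from the article
share_of_global = 0.015       # "about 1.5%", from the article

global_twh = implied_global_twh(data_center_twh_2024, share_of_global)
avg_gw = average_power_gw(data_center_twh_2024)
# Assumption: "more than double" is taken as a floor of exactly 2x.
floor_2030_twh = 2 * data_center_twh_2024

print(f"Implied global consumption: {global_twh:,.0f} TWh")
print(f"Average data-center draw in 2024: {avg_gw:.1f} GW")
print(f"2030 floor if usage doubles: {floor_2030_twh:.0f} TWh")
```

On these numbers, the 2024 figure corresponds to an average continuous draw of roughly 47 gigawatts.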

Utilities in the U.S. are already planning for data centers, driven largely by AI workloads, to account for between 6.7% and 12% of total electricity demand in some regions by 2028, prompting some executives to warn that there simply “isn’t sufficient energy on the grid” to support unchecked AI growth without significant new generation capacity.

In that context, proposals like space-based data centers start to read less like sci-fi indulgence and more like a symptom of an industry confronting the physical limits of Earth-bound energy and cooling. On paper, space-based data centers sound like an elegant solution. In practice, some experts are skeptical.

Reaching for the stars

Joe Morgan, COO of data center infrastructure firm Patmos, is blunt about the near-term prospects. “What won’t happen in 2026 is the whole ‘data centers in space’ thing,” he told Live Science. “One of the tech billionaires might actually get close to doing it, but aside from bragging rights, why?”

Morgan points out that the industry has repeatedly flirted with extreme cooling concepts, from mineral-oil immersion to subsea facilities, only to abandon them when operational realities bite. “There is still hype about building data centers under the ocean, but any thermal benefits are far outweighed by the problem of replacing components,” he said, noting that hardware churn is fundamental to modern computing.

That churn is central to the skepticism around orbital AI. GPUs and specialized accelerators depreciate quickly as new architectures deliver step-change improvements every few years. On Earth, racks can be swapped, boards replaced and systems upgraded continuously. In orbit, every repair requires launches, docking or robotic servicing, none of which scale easily or cheaply.

“Who wants to take a spaceship to update the orbital infrastructure every year or two?” Morgan asks. “What if a vital component breaks? Actually, forget that, what about the latency?”

Latency is not a footnote. Many AI workloads depend on tightly coupled systems with extremely fast interconnects, both within data centers and between them. Google’s proposal leans heavily on laser-based inter-satellite links to mimic those connections, but the physics remains unforgiving. Even at low Earth orbit, round-trip latency to ground stations is unavoidable.
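To put a floor under that latency, here is a minimal Python sketch of the best-case light travel time between a ground station and a satellite passing directly overhead. The altitudes are illustrative assumptions, not figures from the Google study, and real links would add slant range, routing and processing delays on top.

```python
# Lower bound on ground-to-satellite round-trip time, set by the speed of light.
# Assumes the satellite is directly overhead and ignores all other delays.

SPEED_OF_LIGHT_M_S = 299_792_458  # exact, by SI definition


def min_round_trip_ms(altitude_km: float) -> float:
    """Best-case ground -> satellite -> ground light time, in milliseconds."""
    if altitude_km < 0:
        raise ValueError("altitude must be non-negative")
    one_way_seconds = altitude_km * 1000 / SPEED_OF_LIGHT_M_S
    return 2 * one_way_seconds * 1000


# Illustrative low-Earth-orbit altitudes (assumptions for this example).
for altitude_km in (400, 650, 2000):
    print(f"{altitude_km:>5} km: at least {min_round_trip_ms(altitude_km):.2f} ms")
```

Even in this idealized case the round trip costs a few milliseconds, a delay that no engineering improvement can remove because it comes from distance alone.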

“Putting the servers in orbit is a stupid idea, unless your customers are also in orbit,” Morgan said.

Not everyone agrees it should be dismissed so quickly. Paul Kostek, a senior member of IEEE and systems engineer at Air Direct Solutions, said the interest reflects real physical pressures on terrestrial infrastructure.

“The interest in placing data centers in space has grown as the cost of building centers on earth keeps increasing,” Kostek said. “There are several advantages to space-based or Moon-based centers. First, access to 24 hours a day of solar power… and second, the ability to cool the centers by radiating excess heat into space versus using water.”

From a purely thermodynamic perspective, those arguments are sound. Heat rejection is one of the hardest limits on computation, and Earth-based data centers are increasingly constrained by water availability, grid capacity and local environmental opposition.
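The scale of radiative cooling can be estimated with the Stefan-Boltzmann law, which gives the power an ideal surface radiates as emissivity × σ × area × T⁴. The sketch below is a simplified illustration, not a design calculation: the 1-megawatt load, 300-kelvin radiator temperature and 0.9 emissivity are assumed values, and it ignores absorbed sunlight and heat radiated from Earth.

```python
# Idealized radiator sizing from the Stefan-Boltzmann law: P = e * sigma * A * T^4.
# All example inputs are assumptions chosen for illustration only.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9) -> float:
    """Radiating area needed to reject heat_w watts to deep space."""
    if heat_w < 0 or temp_k <= 0 or not 0 < emissivity <= 1:
        raise ValueError("invalid heat load, temperature or emissivity")
    return heat_w / (emissivity * STEFAN_BOLTZMANN * temp_k ** 4)


# Assumed example: 1 MW of waste heat from a radiator held at 300 K.
area = radiator_area_m2(1e6, 300.0)
print(f"Radiating area for 1 MW at 300 K: about {area:,.0f} square meters")
```

Under these assumptions, a single megawatt needs on the order of 2,400 square meters of radiating surface. Because the required area falls with the fourth power of temperature, running radiators hotter shrinks them quickly, so cooling in orbit is a question of surface area and operating temperature rather than of water.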

The backlash against terrestrial AI infrastructure isn’t limited to energy and water concerns; health fears are increasingly part of the story. In Memphis, residents near xAI’s massive Colossus data center have voiced concern about air quality and long-term respiratory impacts, with community members reporting worsened symptoms and fear of pollution-linked illnesses since the facility began operating. In other states, opponents of proposed hyperscale data center projects have framed their resistance around potential health and environmental harms, arguing that large facilities could degrade local air and water quality and exacerbate existing public health problems.

Putting data centers into orbit would remove some constraints, but replace them with others.

Staying grounded

“The technology questions that need to be answered include: Can the current processors used in data centers on Earth survive in space?” Kostek said. “Will the processors have the ability to make it through solar storms or direct exposure to greater radiation on the Moon?”

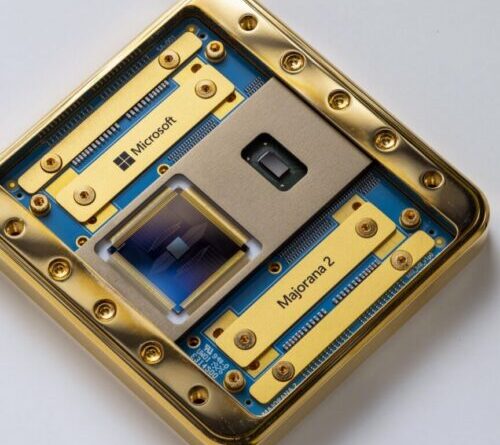

Google researchers have already begun probing some of those questions through early work on Project Suncatcher. The team describes radiation testing of its Tensor Processing Units (TPUs) and modeling of how tightly clustered satellite formations could support the high-bandwidth inter-satellite links needed for distributed computing. Even so, Kostek stresses that the work remains exploratory.

“Preliminary testing is being done to figure out the practicality of space-based data centers,” he said. “While substantial technical obstacles stay and application is still numerous years away, this technique might ultimately provide a reliable method to accomplish expansion.”

That word — expansion — may be the real clue. For some researchers, the most compelling rationale for off-world computing has little to do with serving Earth-based users at all. Christophe Bosquillon, co-chair of the Moon Village Association’s working group for Disruptive Technology & Lunar Governance, argues that space-based data centers make more sense as infrastructure for space itself.

“With humankind on track to soon establish a permanent lunar presence, an infrastructure backbone for a future data-driven lunar industry and the cis-lunar economy is required,” he told Live Science.

From this perspective, space-based data centers aren’t substitutes for Earth’s infrastructure so much as tools for enabling space activity, handling everything from lunar sensor data to autonomous systems and navigation.

“Economical energy is a crucial concern for all activities and will include a nuclear component alongside solar energy and arrays of fuel cells and batteries,” Bosquillon said, adding that the challenges extend well beyond engineering to governance, law and international coordination.

Crucially, space-based computing could offload non-latency-sensitive workloads from Earth altogether. “Resolving the energy issue in space and taking that problem off the Earth to process Earth-related non-latency-sensitive data … has benefit,” Bosquillon said, even extending to the idea of space and the Moon as a secure vault for “civilisational” data.

Seen this way, Google’s proposal looks less like a solution to today’s data center shortages and more like a probe into the long-term physics of computation. As AI approaches planetary-scale energy consumption, the question may not be whether Earth has enough capacity, but whether researchers can afford to ignore environments where energy is abundant but everything else is hard.

For now, space-based AI remains strictly experimental. Whether it ever leaves Earth’s gravity may depend less on solar panels and lasers than on how desperate the energy race becomes.

Carly Page is a technology journalist and copywriter with more than a decade of experience covering cybersecurity, emerging tech, and digital policy. She previously worked as the senior cybersecurity reporter at TechCrunch.

Now a freelancer, she writes news, analysis, interviews, and long-form features for publications including Forbes, IT Pro, LeadDev, Resilience Media, The Register, TechCrunch, TechFinitive, TechRadar, TES, The Telegraph, TIME, Uswitch, WIRED, and others. Carly also produces copywriting and editorial work for technology companies and events.
