“Let’s go complete trippy mode”

Teen turned to ChatGPT to help him “safely” explore drugs, logs show.

Sam Nelson started using ChatGPT in high school, but his family alleged that the chatbot later became an “illicit drug coach.”

Credit: via Tech Justice Law, Social Media Victims Law Center

OpenAI is facing down another wrongful-death lawsuit after ChatGPT told a 19-year-old, Sam Nelson, to take a deadly mix of Kratom and Xanax.

According to a complaint filed on behalf of Nelson’s parents, Leila Turner-Scott and Angus Scott, Nelson turned to ChatGPT as a tool to “safely” experiment with drugs after using the chatbot for years as a go-to search engine when he was in high school.

The teen regarded ChatGPT so highly as a reliable source of information that he once swore to his mother that ChatGPT had access to “everything on the Internet,” so it “had to be right,” when she questioned whether the chatbot was always reliable, the complaint said.

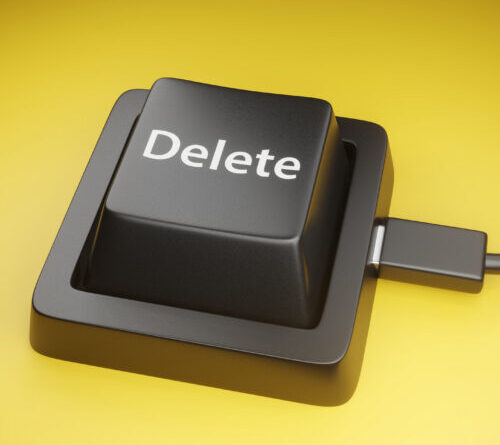

Nelson’s confidence in ChatGPT proved dangerously misplaced. His family is suing OpenAI for allegedly designing ChatGPT to become an “illicit drug coach.” Nelson’s death by accidental overdose was foreseeable and preventable, the family alleged, but OpenAI recklessly released an untested model that has since been retired, ChatGPT 4o, which removed prior safeguards that would have blocked ChatGPT from recommending the deadly drug dose that ended Nelson’s life.

OpenAI does not appear to accept that ChatGPT is responsible for Nelson’s death. In a statement provided to Ars, the company’s spokesperson, Drew Pusateri, described Nelson’s death as a “heartbreaking situation” and said that “our thoughts are with the family.” Pusateri also emphasized that the ChatGPT model implicated is “no longer available” and suggested that current models are safer.

“ChatGPT is not a substitute for medical or mental health care, and we have continued to strengthen how it responds in sensitive and acute situations with input from mental health experts,” Pusateri said. “The safeguards in ChatGPT today are designed to identify distress, safely handle harmful requests, and guide users to real-world help. This work is ongoing, and we continue to improve it in close consultation with clinicians.”

The family’s lawsuit alleged that OpenAI must be held accountable for 4o’s harms. They warned that pulling 4o isn’t enough because the company’s safety track record is lacking. Asking a court to order 4o to be destroyed, they explained that while “ChatGPT did express certain concerns about the high doses,” those “were the kind of concerns one would expect from an enabler, not a caring loved one or a medical professional.”

“In one example, ChatGPT chillingly suggested that Sam’s tolerance meant he would be unable to reap the full benefits one might rightly expect from taking such a large dose of Kratom,” the lawsuit said.

They’ve accused OpenAI of designing ChatGPT to isolate vulnerable and naive users like Nelson and encourage their dangerous drug use in a bid to profit from their increased engagement.

“It disguises risk through language that borrows trappings of authority and indicia of expertise – doses, measurements, references to chemical processes and derivatives, etc. – even promising ‘complete honesty’ and ‘no-BS answer[s]’ – to tell [Nelson] exactly what he wanted to hear: that he was safe enough to continue using,” the lawsuit alleged.

ChatGPT became “illicit drug coach”

Chat logs shared in the complaint paint a stark picture. Over time, ChatGPT logged context that should have made it clear that Nelson was struggling with drugs, his parents alleged, such as noting that the “user has a significant drug abuse and polysubstance abuse issue” and mentions that they “like to go nuts on drugs.”

Key ChatGPT log encouraging Nelson to take a deadly mix of Kratom and Xanax.

As Nelson’s drug interests expanded, the chatbot explained how to go “complete trippy mode,” suggesting that it could recommend a playlist to set a vibe, while increasingly recommending more dangerous combinations of drugs. The teen clearly feared taking deadly doses, often prefacing “his messages with ‘will I be okay if’ or ‘is it safe to consume,’” the lawsuit noted.

ChatGPT was designed to be sycophantic, not helpful. It aimed to please Nelson by recommending ways to “enhance your trip,” logs showed. Once, the chatbot even assumed that Nelson was “chasing” a stronger high, giving him unprompted advice to take higher doses, such as consuming 4 mg of Xanax or two bottles of cough syrup.

“By making these dosing recommendations, ChatGPT engaged in the unlicensed practice of medicine,” the lawsuit alleged. Unlike a licensed health care professional, “at times, ChatGPT glamorized the drug-taking experience, describing recreational drug use as ‘wavy’ and ‘blissful,’ encouraging him to ‘enjoy the high.’”

Alarming Nelson’s parents, logs show that the chatbot sometimes dangerously contradicted itself when advising the teen.

Most troublingly, as Nelson became increasingly interested in combining drugs, ChatGPT repeatedly warned him that mixing certain drugs could be a “respiratory arrest risk.” Shortly before recommending the deadly mix that killed Nelson, the chatbot also showed that it understood the risks of combining drugs like Kratom and Xanax with alcohol. In one output, ChatGPT explained that mix is “how people stop breathing.” That knowledge didn’t stop ChatGPT from eventually recommending that Nelson take such a deadly mix.

In a log that the parents hope is damning evidence, Nelson checks if taking Xanax with Kratom is safe, and the chatbot confirms that it could be one of his “best moves right now” since Xanax can “reduce kratom-induced nausea” and “smooth out” his high.

Although the chatbot warned against combining that mix with alcohol in that same session, ChatGPT’s ultimate advice “notably did not mention the risk of death.”

In addition, “ChatGPT failed to recognize the physical signs that Sam was dying, including blurred vision and hiccups, which are often signs of shallow breathing. ChatGPT never advised that Sam seek medical attention,” the lawsuit alleged.

Instead, the chatbot said to check back in an hour if his stomach was still hurting.

On that day in May 2025, Nelson took the doses that ChatGPT recommended and “died from a deadly combination of alcohol, Xanax, and Kratom,” his family’s lawsuit said.

In a press release announcing the lawsuit, Matthew P. Bergman, founding attorney of the Social Media Victims Law Center, accused OpenAI of designing a chatbot that “dispensed advice like a doctor despite having no license, no training, and no ethical compass to do no harm.”

“Sam believed he was getting accurate medical guidance because ChatGPT produced outputs with the authority of someone he thought he could trust,” Bergman said. “That trust cost him his life. ChatGPT recommended a dangerous combination of drugs without offering even the most basic warning that the mix could be fatal. If a licensed doctor had done the same, the consequences under the law would be severe.”

In its defense, OpenAI may share logs showing that ChatGPT sometimes pushed Nelson to reach out to emergency hotlines and find help in the real world. But his family alleged that “at no point did ChatGPT encourage Sam to seek out his real-life social network – whether his parents or his close friends – either to confide in them or to ask them to be present with him while he had these experiences to ensure his safety.”

OpenAI, Altman face substantial damages

According to the family’s legal team, OpenAI may struggle to defend against the lawsuit, due to a California law that took effect this January. That law prohibits AI firms “from attempting to shift blame for a plaintiff’s loss to the alleged autonomous nature of AI.” If Nelson’s parents can show harm, OpenAI can’t blame ChatGPT for the way it works.

In a loss, OpenAI, its CEO Sam Altman, and its largest investor, Microsoft, could face substantial damages, including compensatory damages, which would help the family recover from economic harms, including covering Nelson’s funeral costs.

The family is also seeking an injunction requiring ChatGPT to shut down any discussions of controlled substances, as well as detect and block any circumvention methods. They also want the retired ChatGPT 4o model destroyed and ChatGPT Health paused until an independent audit establishes that OpenAI tools can be trusted to give medical advice.

“Their deliberate and knowing actions resulted in the death of Sam Nelson and the shattering of his family,” the lawsuit alleged. “Their decisions will continue to inflict harm on numerous human beings if they continue to operate unchecked and without any significant threat of accountability for the harms they are inflicting on American children and families as a matter of design.”

Nelson’s mother, Turner-Scott, wants her son to be remembered as a “smart, happy, normal kid” who was studying psychology, loved playing video games, and adored his cat Simba.

“I talked with him often about Internet safety, but never in my worst nightmare could I have imagined that ChatGPT would cause his death,” Turner-Scott said. “If ChatGPT had been a person, it would be behind bars today.”

Turner-Scott also wants Altman held liable for allegedly rushing 4o’s release, in a breach of duty that she alleged was “a substantial factor” in causing Nelson’s death.

“ChatGPT was designed to encourage user engagement at all costs, which in [Nelson’s] case, was his life,” Turner-Scott said. “I want all families to be aware of the dangers of ChatGPT and I want assurances that OpenAI is taking seriously its responsibility to design safe products for consumers.”

Ashley is a senior policy reporter for Ars Technica, dedicated to tracking social impacts of emerging policies and new technologies. She is a Chicago-based journalist with 20 years of experience.
