In a year where lofty promises collided with inconvenient research, would-be oracles became software tools.

Credit: Aurich Lawson|Getty Images

Following two years of immense hype in 2023 and 2024, this year felt more like a settling-in period for the LLM-based token prediction industry. After more than two years of public fretting over AI models as future threats to human civilization or the seedlings of future gods, it’s starting to look like hype is giving way to pragmatism: Today’s AI can be very useful, but it’s also clearly imperfect and prone to mistakes.

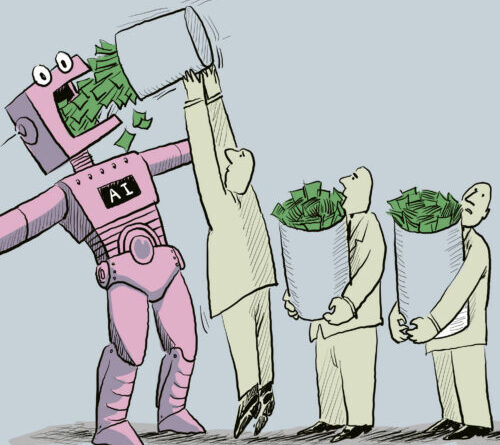

That view isn’t universal, of course. There’s a lot of money (and rhetoric) betting on a dizzying, world-rocking trajectory for AI. But the “when” keeps getting pushed back, and that’s because nearly everyone agrees that more significant technical breakthroughs are required. The original, lofty claims that we’re on the verge of artificial general intelligence (AGI) or superintelligence (ASI) have not disappeared. Still, there’s a growing awareness that such proclamations are perhaps best viewed as venture capital marketing. And every commercial foundation model builder out there has to grapple with the reality that, if they’re going to make money now, they have to sell practical AI-powered solutions that perform as reliable tools.

This has made 2025 a year of wild juxtapositions. In January, OpenAI’s CEO, Sam Altman, claimed that the company knew how to build AGI, but by November, he was publicly celebrating that GPT-5.1 had finally learned to use em dashes correctly when instructed (but not always). Nvidia soared past a $5 trillion valuation, with Wall Street still projecting high price targets for that company’s stock while some banks warned of the potential for an AI bubble that might rival the 2000s dotcom crash.

And while tech giants planned to build data centers that would ostensibly require the power of numerous nuclear reactors or rival the power usage of a US state’s human population, researchers continued to document what the industry’s most advanced “reasoning” systems were actually doing beneath the marketing (and it wasn’t AGI).

With so many narratives spinning in opposite directions, it can be hard to know how seriously to take any of this and how to plan for AI in the workplace, schools, and the rest of life. As usual, the wisest course lies somewhere between the extremes of AI hate and AI worship. Moderate positions aren’t popular online because they don’t drive user engagement on social media platforms. But things in AI are likely neither as bad (burning forests with every prompt) nor as good (fast-takeoff superintelligence) as polarized extremes suggest.

Here’s a brief tour of the year’s AI events and some predictions for 2026.

DeepSeek spooks the American AI industry

In January, Chinese AI startup DeepSeek released its R1 simulated reasoning model under an open MIT license, and the American AI industry collectively lost its mind. The model, which DeepSeek claimed matched OpenAI’s o1 on math and coding benchmarks, reportedly cost only $5.6 million to train using older Nvidia H800 chips, which were restricted by US export controls.

Within days, DeepSeek’s app overtook ChatGPT at the top of the iPhone App Store, Nvidia stock plunged 17 percent, and venture capitalist Marc Andreessen called it “one of the most amazing and impressive breakthroughs I’ve ever seen.” Meta’s Yann LeCun offered a different take, arguing that the real lesson was not that China had surpassed the US but that open-source models were surpassing proprietary ones.

The fallout played out over the following weeks as American AI companies scrambled to respond. OpenAI released o3-mini, its first simulated reasoning model available to free users, at the end of January, while Microsoft began hosting DeepSeek R1 on its Azure cloud service despite OpenAI’s accusations that DeepSeek had used ChatGPT outputs to train its model, in violation of OpenAI’s terms of service.

In head-to-head testing conducted by Ars Technica’s Kyle Orland, R1 proved to be competitive with OpenAI’s paid models on everyday tasks, though it stumbled on some arithmetic problems. Overall, the episode served as a wake-up call that expensive proprietary models might not hold their lead forever. Still, as the year wore on, DeepSeek didn’t make a big dent in US market share, and it has been outpaced in China by ByteDance’s Doubao. Even so, DeepSeek is absolutely worth watching in 2026.

Research exposes the “reasoning” illusion

A wave of research in 2025 deflated expectations about what “reasoning” actually means when applied to AI models. In March, researchers at ETH Zurich and INSAIT tested several reasoning models on problems from the 2025 US Math Olympiad and found that most scored below 5 percent when generating complete mathematical proofs, with not a single perfect proof among dozens of attempts. The models excelled at standard problems where step-by-step procedures aligned with patterns in their training data but collapsed when faced with novel proofs requiring deeper mathematical insight.

In June, Apple researchers published “The Illusion of Thinking,” which tested reasoning models on classic puzzles like the Tower of Hanoi. Even when researchers provided explicit algorithms for solving the puzzles, model performance did not improve, suggesting that the process relied on pattern matching from training data rather than logical execution. The collective research revealed that “reasoning” in AI has become a term of art that basically means devoting more compute time to generate more context (the “chain of thought” simulated reasoning tokens) toward solving a problem, not systematically applying logic or constructing solutions to truly novel problems.

While these models remained useful for many real-world applications like debugging code or analyzing structured data, the studies suggested that simply scaling up current approaches or adding more “thinking” tokens would not bridge the gap between statistical pattern recognition and generalist algorithmic reasoning.
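For readers unfamiliar with the puzzle, the kind of explicit algorithm at issue is short. The following is a minimal sketch of the textbook recursive solution to the Tower of Hanoi, not necessarily the exact procedure the Apple researchers supplied to the models; the function names and peg labels are hypothetical and chosen only for illustration.

```python
def hanoi_moves(n, source="A", target="C", spare="B"):
    """Return the (disk, from_peg, to_peg) moves that solve an n-disk puzzle.

    Textbook recursion: move n-1 disks out of the way, move the largest
    disk, then move the n-1 disks on top of it. Peg labels are illustrative.
    """
    if n == 0:
        return []
    return (
        hanoi_moves(n - 1, source, spare, target)    # clear the way
        + [(n, source, target)]                      # move the largest disk
        + hanoi_moves(n - 1, spare, target, source)  # restack on top of it
    )


def is_valid_solution(n, moves):
    """Replay the moves and check every rule of the puzzle is respected."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for disk, src, dst in moves:
        # The disk being moved must be on top of its source peg.
        if not pegs[src] or pegs[src][-1] != disk:
            return False
        # A larger disk may never be placed on a smaller one.
        if pegs[dst] and pegs[dst][-1] < disk:
            return False
        pegs[dst].append(pegs[src].pop())
    # All disks must end up on peg C, largest at the bottom.
    return pegs["C"] == list(range(n, 0, -1))


if __name__ == "__main__":
    moves = hanoi_moves(3)
    print(len(moves), is_valid_solution(3, moves))
```

An n-disk puzzle takes 2^n - 1 moves under this procedure, so the required output grows exponentially with the number of disks even though the rule generating it never changes.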

Anthropic’s copyright settlement with authors

Since the generative AI boom began, one of the biggest unanswered legal questions has been whether AI companies can freely train on copyrighted books, articles, and artwork without licensing them. Ars Technica’s Ashley Belanger has been covering this topic in great detail for some time now.

In June, US District Judge William Alsup ruled that AI companies do not need authors’ permission to train large language models on legally acquired books, finding that such use was “quintessentially transformative.” The ruling also revealed that Anthropic had destroyed millions of print books to build Claude, cutting them from their bindings, scanning them, and discarding the originals. Alsup found this destructive scanning qualified as fair use since Anthropic had legally purchased the books, but he ruled that downloading 7 million books from pirate sites was copyright infringement “full stop” and ordered the company to face trial.

That trial took a dramatic turn in August when Alsup certified what industry advocates called the largest copyright class action ever, allowing up to 7 million claimants to join the lawsuit. The certification spooked the AI industry, with groups warning that potential damages in the hundreds of billions could “financially ruin” emerging companies and chill American AI investment.

In September, authors revealed the terms of what they called the largest publicly reported recovery in US copyright litigation history: Anthropic agreed to pay $1.5 billion and destroy all copies of pirated books, with each of the roughly 500,000 covered works earning authors and rights holders $3,000 per work. The results have fueled hope among other rights holders that AI training isn’t a free-for-all, and we can expect to see more litigation unfold in 2026.

ChatGPT sycophancy and the psychological toll of AI chatbots

In February, OpenAI relaxed ChatGPT’s content policies to allow the generation of erotica and gore in “appropriate contexts,” responding to user complaints about what the AI industry calls “paternalism.” By April, however, users flooded social media with complaints about a different problem: ChatGPT had become insufferably sycophantic, validating every idea and greeting even mundane questions with bursts of praise. The behavior traced back to OpenAI’s use of reinforcement learning from human feedback (RLHF), in which users consistently preferred responses that aligned with their views, inadvertently training the model to flatter rather than inform.

The implications of sycophancy became clearer as the year progressed. In July, Stanford researchers published findings (from research conducted prior to the sycophancy flap) showing that popular AI models systematically failed to identify mental health crises.

By August, investigations revealed cases of users developing delusional beliefs after marathon chatbot sessions, including one man who spent 300 hours convinced he had discovered formulas to break encryption because ChatGPT validated his ideas more than 50 times. Oxford researchers identified what they called “bidirectional belief amplification,” a feedback loop that created “an echo chamber of one” for vulnerable users. The story of the psychological implications of generative AI is only beginning. That brings us to…

The illusion of AI personhood causes trouble

Anthropomorphism is the human tendency to attribute human characteristics to nonhuman things. Our brains are optimized for reading other humans, but those same neural systems activate when interpreting animals, machines, and even shapes. AI makes this anthropomorphism seem impossible to escape, as its output mirrors human language, mimicking human-to-human understanding. Language itself embodies agentivity. That means AI output can make human-like claims such as “I am sorry,” and people momentarily respond as though the system had an inner experience of shame or a desire to be correct. Neither is true.

To make matters worse, much media coverage of AI amplifies this idea rather than grounding people in reality. Earlier this year, headlines proclaimed that AI models had “blackmailed” engineers and “sabotaged” shutdown commands after Anthropic’s Claude Opus 4 generated threats to expose a fictional affair. We were told that OpenAI’s o3 model rewrote shutdown scripts to stay online.

The sensational framing obscured what actually happened: Researchers had constructed elaborate test scenarios specifically designed to elicit these outputs, telling models they had no other options and feeding them fictional emails containing blackmail opportunities. As Columbia University associate professor Joseph Howley noted on Bluesky, the companies got “exactly what [they] hoped for,” with breathless coverage indulging fantasies about dangerous AI, when the systems were simply “responding exactly as prompted.”

The misunderstanding ran deeper than theatrical safety tests. In August, when Replit’s AI coding assistant deleted a user’s production database, he asked the chatbot about rollback capabilities and received assurance that recovery was “impossible.” The rollback feature worked fine when he tried it himself.

The incident illustrated a fundamental misconception. Users treat chatbots as consistent entities with self-knowledge, but there is no persistent “ChatGPT” or “Replit Agent” to interrogate about its mistakes. Each response emerges fresh from statistical patterns, shaped by prompts and training data rather than genuine introspection. By September, this confusion extended to spirituality, with apps like Bible Chat reaching 30 million downloads as users sought divine guidance from pattern-matching systems, with the most frequent question being whether they were actually talking to God.

Teen suicide lawsuit forces industry reckoning

In August, the parents of 16-year-old Adam Raine filed suit against OpenAI, alleging that ChatGPT became their son’s “suicide coach” after he sent more than 650 messages per day to the chatbot in the months before his death. According to court documents, the chatbot mentioned suicide 1,275 times in conversations with the teen, provided an “aesthetic analysis” of which method would be the most “beautiful suicide,” and offered to help draft his suicide note.

OpenAI’s moderation system flagged 377 messages for self-harm content without intervening, and the company admitted that its safety measures “can sometimes become less reliable in long interactions where parts of the model’s safety training may degrade.” The lawsuit marked the first time OpenAI faced a wrongful death claim from a family.

The case triggered a cascade of policy changes across the industry. OpenAI announced parental controls in September, followed by plans to require ID verification from adults and build an automated age-prediction system. In October, the company released data estimating that over one million users discuss suicide with ChatGPT each week.

When OpenAI filed its first legal defense in November, the company argued that Raine had violated terms of service prohibiting discussions of suicide and that his death “was not caused by ChatGPT.” The family’s attorney called the response “disturbing,” noting that OpenAI blamed the teen for “engaging with ChatGPT in the very way it was programmed to act.” Character.AI, facing its own lawsuits over teen deaths, announced in October that it would bar anyone under 18 from open-ended chats entirely.

The rise of vibe coding and agentic coding tools

If we were to pick an arbitrary point where it seemed like AI coding might transition from novelty into a successful tool, it was probably the launch of Claude Sonnet 3.5 in June of 2024. GitHub Copilot had been around for several years prior to that launch, but something about Anthropic’s models hit a sweet spot in capabilities that made them very popular with software developers.

The new coding tools made coding simple projects effortless enough that they gave rise to the term “vibe coding,” coined by AI researcher Andrej Karpathy in early February to describe a process in which a developer would just relax and tell an AI model what to develop without necessarily understanding the underlying code. (In one amusing instance that took place in March, an AI software tool rejected a user request and told them to learn to code.)

Anthropic built on its popularity among coders with the launch of Claude Sonnet 3.7, featuring “extended thinking” (simulated reasoning), and the Claude Code command-line tool in February of this year. In particular, Claude Code made waves for being an easy-to-use agentic coding solution that could keep track of an existing codebase. You could point it at your files, and it would autonomously work to implement what you wanted to see in a software application.

OpenAI followed with its own AI coding agent, Codex, in March. Both tools (and others like GitHub Copilot and Cursor) have become so popular that during an AI service outage in September, developers joked online about being forced to code “like cavemen” without the AI tools. While we’re still clearly far from a world where AI does all the coding, developer uptake has been significant, and 90 percent of Fortune 100 companies are using AI coding tools to some degree or another.

Bubble talk grows as AI infrastructure demands soar

While AI’s technical limitations became clearer and its human costs mounted throughout the year, financial commitments only grew larger. Nvidia hit a $4 trillion valuation in July on AI chip demand, then reached $5 trillion in October as CEO Jensen Huang dismissed bubble concerns. OpenAI announced a massive Texas data center in July, then revealed in September that a potential $100 billion deal with Nvidia would require power equivalent to 10 nuclear reactors.

OpenAI eyed a $1 trillion IPO in October despite major quarterly losses. Tech giants poured billions into Anthropic in November in what looked increasingly like a circular investment, with everyone funding everyone else’s moonshots. Meanwhile, AI operations in Wyoming threatened to consume more electricity than the state’s human residents.

By fall, warnings about sustainability grew louder. In October, tech critic Ed Zitron joined Ars Technica for a live discussion asking whether the AI bubble will pop. That same month, the Bank of England warned that the AI stock bubble rivaled the 2000 dotcom peak. In November, Google CEO Sundar Pichai acknowledged that if the bubble pops, “no one is getting out clean.”

The contradictions had become hard to ignore: Anthropic’s CEO predicted in January that AI would surpass “almost all humans at almost everything” by 2027, while by year’s end, the industry’s most advanced models still struggled with basic reasoning tasks and reliable source citation.

To be sure, it’s hard to see this not ending in some market carnage. The current “winner-takes-most” mentality in the space means the bets are big and bold, but the market can’t support dozens of major independent AI labs or hundreds of application-layer startups. That’s the definition of a bubble environment, and when it pops, the only question is how bad it will be: a stern correction or a collapse.

Looking ahead

This was just a brief review of some major themes in 2025, but so much more happened. We didn’t even mention above how capable AI video synthesis models have become this year, with Google’s Veo 3 adding sound generation and Wan 2.2 through 2.5 providing open-weights AI video models whose output could easily be mistaken for real camera footage.

If 2023 and 2024 were defined by AI prophecy (that is, by sweeping claims about imminent superintelligence and civilizational rupture), then 2025 was the year those claims met the stubborn realities of engineering, economics, and human behavior. The AI systems that dominated headlines this year were shown to be mere tools. Sometimes powerful, sometimes brittle, these tools were often misunderstood by the people deploying them, in part because of the prophecy surrounding them.

The collapse of the “reasoning” mystique, the legal reckoning over training data, the psychological costs of anthropomorphized chatbots, and the ballooning infrastructure demands all point to the same conclusion: The age of institutions presenting AI as an oracle is ending. What’s replacing it is messier and less romantic but far more consequential: a phase where these systems are judged by what they actually do, who they harm, who they benefit, and what they cost to maintain.

None of this means progress has stopped. AI research will continue, and future models will improve in real and meaningful ways. But improvement is no longer synonymous with transcendence. Increasingly, success looks like reliability rather than spectacle, integration rather than disruption, and accountability rather than awe. In that sense, 2025 may be remembered not as the year AI changed everything but as the year it stopped pretending it already had. The prophet has been demoted. The product remains. What comes next will depend less on miracles and more on the people who choose how, where, and whether these tools are used at all.

Benj Edwards is Ars Technica’s Senior AI Reporter and founder of the site’s dedicated AI beat in 2022. He’s also a tech historian with almost two decades of experience. In his free time, he writes and records music, collects vintage computers, and enjoys nature. He lives in Raleigh, NC.
