Even if you don't know much about the inner workings of generative AI models, you probably know they require a lot of memory. It is currently almost impossible to buy a measly stick of RAM without getting fleeced. Google Research recently revealed TurboQuant, a compression algorithm that reduces the memory footprint of large language models (LLMs) while also boosting speed and maintaining accuracy.

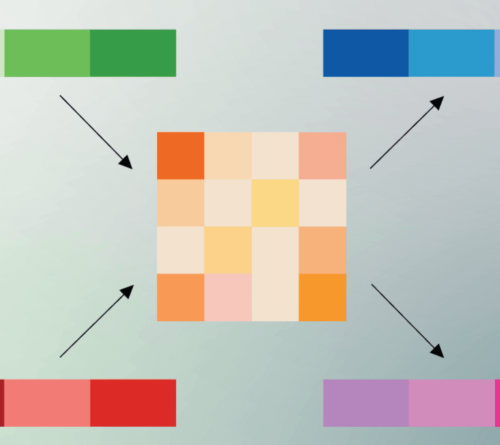

TurboQuant is aimed at reducing the size of the key-value cache, which Google likens to a "digital cheat sheet" that stores important information so it doesn't have to be recomputed. This cheat sheet is necessary because, as we say all the time, LLMs don't actually know anything; they can do a good impression of knowing things through the use of vectors, which map the semantic meaning of tokenized text. When two vectors are similar, that means they are conceptually related.
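To make the "cheat sheet" idea concrete, here is a minimal sketch of what a key-value cache holds during text generation, assuming a single attention head and plain Python lists. The `KVCache` class, its `append` and `attend` methods, and the `cosine_similarity` helper are hypothetical names for illustration, not Google's code or any library's API. Each token's key and value vectors are stored once and reused at every later step, and similarity scores (dot products) decide how much each cached value contributes.

```python
import math

def dot(a, b):
    """Dot product: larger when two vectors point in similar directions."""
    return sum(x * y for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Similarity in [-1, 1] that ignores vector length and compares direction only."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

class KVCache:
    """Hypothetical minimal key-value cache for one attention head.

    Every processed token adds one key and one value vector. They are kept
    in memory and reused instead of being recomputed for each new token,
    which is why the cache grows with context length.
    """

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)

    def attend(self, query):
        """Return a softmax-weighted average of cached values.

        Weights come from scaled query-key dot products, so values whose
        keys resemble the query contribute the most.
        """
        scale = 1 / math.sqrt(len(query))
        scores = [dot(query, k) * scale for k in self.keys]
        top = max(scores)  # subtract the max before exp() for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        dim = len(self.values[0])
        return [sum(w * v[i] for w, v in zip(weights, self.values)) for i in range(dim)]

# Usage: two cached tokens; the query resembles the first key.
cache = KVCache()
cache.append([1.0, 0.0], [10.0, 0.0])
cache.append([0.0, 1.0], [0.0, 10.0])
out = cache.attend([1.0, 0.0])
print(out)  # the first value dominates the weighted average
```

Every cached key and value is a full vector, so long conversations fill memory quickly; that stored data is what TurboQuant targets.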

High-dimensional vectors, which can have hundreds or thousands of dimensions, might describe complex information like the pixels in an image or a large data set. They also occupy a lot of memory and inflate the size of the key-value cache, bottlenecking performance. To make models smaller and more efficient, developers use quantization techniques to run them at lower precision. The drawback is that the outputs get worse: the quality of token estimation declines. With TurboQuant, Google's early results show an 8x performance increase and a 6x reduction in memory usage in some tests without a loss of quality.
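The quantization trade-off described above can be sketched with generic symmetric ("absmax") scalar quantization, shown here as an assumption for illustration; it is not TurboQuant's method. Each 32-bit float becomes a small signed integer plus one shared scale factor, which saves memory at the cost of rounding error. The function names `quantize` and `dequantize` are hypothetical.

```python
def quantize(values, bits=4):
    """Symmetric uniform quantization of floats to signed integer codes.

    Returns the codes and the single float scale needed to decode them.
    """
    levels = 2 ** (bits - 1) - 1  # e.g. 7 for 4-bit codes in the range -7..7
    max_abs = max(abs(v) for v in values) or 1.0  # avoid dividing by zero on an all-zero vector
    scale = max_abs / levels
    codes = [round(v / scale) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Approximate the original floats from the integer codes."""
    return [c * scale for c in codes]

# Usage: compress one small vector to 4-bit codes and measure the damage.
vec = [0.12, -0.98, 0.45, 0.03, -0.27, 0.66, -0.51, 0.88]
codes, scale = quantize(vec, bits=4)
approx = dequantize(codes, scale)
max_err = max(abs(a - b) for a, b in zip(vec, approx))

bits_fp32 = 32 * len(vec)            # original storage as float32
bits_quant = 4 * len(vec) + 32       # 4-bit codes plus one float32 scale
print(codes, max_err, bits_fp32 / bits_quant)
```

Rounding error per number is at most half a quantization step (`scale / 2`), and fewer bits mean larger steps, which is the quality loss the paragraph mentions. The shared scale is overhead, so the savings approach 8x (32 bits down to 4) only for longer vectors.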

Angles and errors

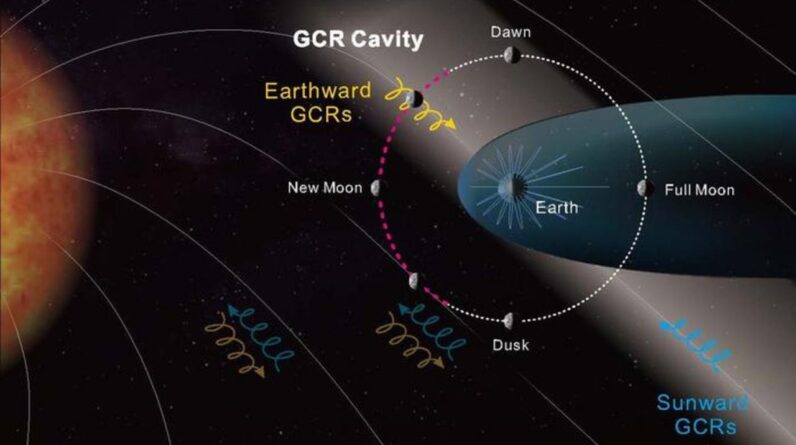

Applying TurboQuant to an AI model is a two-step process. To achieve high-quality compression, Google devised a system called PolarQuant. Usually, vectors in AI models are encoded using standard XYZ coordinates, but PolarQuant converts vectors into polar coordinates in a Cartesian system. On this circular grid, the vectors are reduced to two pieces of information: a radius (core data strength) and a direction (the data's meaning).
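As a rough illustration of the coordinate change described above, the sketch below converts 2-D Cartesian points into a radius and an angle. The function names are hypothetical, and two details are assumptions made for illustration only, not documented features of PolarQuant: splitting a longer vector into adjacent coordinate pairs, and snapping each angle to one of a few evenly spaced directions.

```python
import math

def to_polar(x, y):
    """Convert a 2-D Cartesian point to (radius, angle in radians)."""
    return math.hypot(x, y), math.atan2(y, x)

def from_polar(r, theta):
    """Convert (radius, angle) back to Cartesian (x, y)."""
    return r * math.cos(theta), r * math.sin(theta)

def polar_pairs(vec):
    """Split an even-length vector into adjacent pairs and convert each to polar form.

    Assumption for illustration: adjacent pairing is not necessarily how
    PolarQuant groups coordinates.
    """
    if len(vec) % 2:
        raise ValueError("vector length must be even")
    return [to_polar(vec[i], vec[i + 1]) for i in range(0, len(vec), 2)]

def quantize_angle(theta, bits=3):
    """Snap an angle to the nearest of 2**bits evenly spaced directions."""
    step = 2 * math.pi / (2 ** bits)
    return round(theta / step) * step

# Usage: the radius keeps the magnitude; the angle keeps the direction.
r, theta = to_polar(3.0, 4.0)  # r is 5.0
x, y = from_polar(r, quantize_angle(theta, bits=3))
print(r, theta, x, y)
print(polar_pairs([3.0, 4.0, 0.0, 1.0]))
```

With 3 bits, the direction is stored as one of eight compass-like headings while the radius preserves the vector's overall strength, which hints at why a polar representation can be cheap to store. The source does not describe PolarQuant's exact encoding.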
