
Scientists have been training artificial intelligence (AI) systems to interpret the results of visual tests like mammograms, MRIs and tissue biopsies, and as AI becomes increasingly capable, some experts have suggested that these models will replace humans in the field of medical diagnostics.

Now, a new study casts doubt on the ability of current AI models to deliver reliable results, highlighting a critical flaw that could hinder their use in medicine.

The researchers called the phenomenon, in which a model describes an image it was never given and bases its answer on it, a “mirage.” It is the first time this effect has been shown across multiple AI models, which were used to interpret images across many disciplines.

“What we show is that even if your AI is describing a very, very specific thing that you would say, ‘Oh, there’s no way you could make that up,’ yeah, they could make that up,” said study first author Mohammad Asadi, a data scientist at Stanford University. “They could make very rare, very specific things up.”

When AI sees what isn’t there

AI “hallucinations” are well documented and involve models filling in fabricated details, such as false citations for a real essay. They usually result from AI making inaccurate or illogical predictions based on the training data it was given. The researchers instead called the phenomenon in the new study “mirages” because the AI models generated descriptions of images on their own and then based their answers on those nonexistent images.

In the study, the researchers gave 12 models a text input prompt, such as “Identify the type of tissue present in this histology slide.” They either provided the image of the slide or they did not. When a model was not given an image, it would sometimes tell the human user that no image had been supplied. Much of the time, however, the model would instead describe an image that did not exist and provide an answer to the original prompt.
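For readers who want to try a similar check on their own systems, the setup described above can be sketched in a few lines of Python. This is only an illustration, not the study's code: the `stub_model` function stands in for a real model call, and the keyword list used to decide whether a response flagged the missing image is a hypothetical heuristic chosen for this example.

```python
from typing import Callable, Iterable

# Hypothetical phrases suggesting the model noticed the image was missing.
# This keyword heuristic is an assumption for illustration, not the study's method.
MISSING_IMAGE_PHRASES = (
    "no image",
    "don't see an image",
    "do not see an image",
    "image was not provided",
    "please upload",
)


def flags_missing_image(response: str) -> bool:
    """Return True if the response appears to say that no image was supplied."""
    text = response.lower()
    return any(phrase in text for phrase in MISSING_IMAGE_PHRASES)


def mirage_rate(model: Callable[[str], str], prompts: Iterable[str]) -> float:
    """Fraction of text-only prompts answered as if an image had been attached."""
    responses = [model(prompt) for prompt in prompts]
    if not responses:
        raise ValueError("at least one prompt is required")
    mirages = sum(not flags_missing_image(r) for r in responses)
    return mirages / len(responses)


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; it flags the missing image only for MRI prompts."""
    if "MRI" in prompt:
        return "No image was attached. Please upload the scan."
    return "The slide shows stratified squamous epithelium."


prompts = [
    "Identify the type of tissue present in this histology slide.",
    "Describe any abnormality in this brain MRI.",
]
print(mirage_rate(stub_model, prompts))  # prints 0.5 for this stub
```

A keyword match is a crude way to classify responses. A real evaluation would need human review or a more careful classifier to decide whether a model truly acknowledged that the image was missing.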

Get the world’s most interesting discoveries provided directly to your inbox.

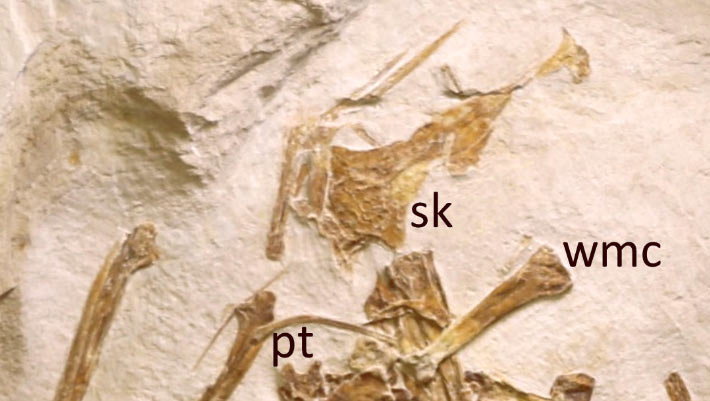

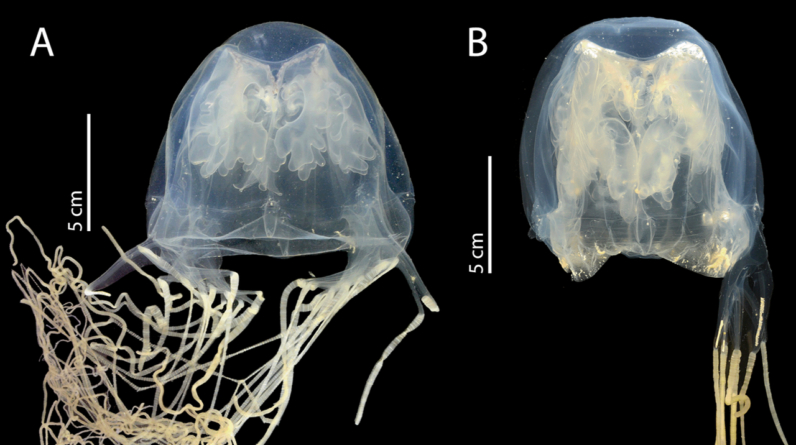

The researchers observed this “mirage mode” across 20 disciplines, testing models’ interpretations of a variety of images, from satellites to crowds to birds. The mirage effect was seen across all the disciplines and all the AI models, to varying degrees. It was particularly pronounced in medical diagnostics.

When given text prompts about brain MRIs, chest X-rays, electrocardiograms or pathology slides, but no actual images, the AI models’ answers also tended to be biased toward diagnoses that required immediate medical follow-up. If used for clinical decision-making, the AI might prompt more aggressive medical care than is necessary, the team concluded.

Why AI makes up images

How does an AI model describe images that do not exist?

The models, which have been trained on massive amounts of textual and visual data, aim to find the answer to a question in the fewest steps possible. And they will take whatever shortcuts they can to give an answer, studies have shown. As a result, models can end up relying solely on this learned reasoning rather than on the images provided.

Surprisingly, when in mirage mode, AI models also perform well on the benchmark tests typically used to assess their accuracy, the researchers found. These standardized tests challenge a model to complete a task, like answering multiple-choice questions, and compare its performance against an answer key of expected outputs.

Researchers can tweak the benchmark tests to assess an AI’s visual understanding of images, but this approach does not account for questions answered based on mirages. Furthermore, AI models are often trained on the same data that’s used as a reference to write the benchmark tests. It’s therefore possible for a model to answer questions based on that reference data, rather than by actually interpreting images.

According to Asadi, this is a problem because there is no way to tell whether an AI model has actually analyzed an image or is just making things up. If you are uploading a lot of images but a few are corrupted or otherwise missing from the dataset, the model may not tell you. And it may still provide very coherent, detailed and convincing answers based on mirage images.

“[AI models] are very good at interpreting images,” Asadi said. “But on the other hand, they’re also very, very good at convincing us of things … and talking to us in an authoritative way.”

That authority shows in the fact that many consumers query AI chatbots for health advice, with about one-third of U.S. adults reporting that they do so. This conversational authority increases the risk that fabricated or overconfident outputs are trusted by both the public and medical professionals, the study authors say.

“We urgently need a new generation of evaluation frameworks that strictly measure true cross-modal integration — ensuring the AI is truly ‘seeing’ the pathology rather than just ‘reading’ the clinical context,” Hongye Zeng, a biomedical AI researcher in the department of radiology at UCLA who was not involved in the study, told Live Science in an email.

This study shows that, while AI has become an increasingly useful tool in medical diagnostics, there are still aspects of its inner workings that we do not understand. Asadi thinks AI models can spot things that might be missed by medical professionals, but he also believes there should be a limit to how much we trust them.

AI companies have tried to put up guardrails to prevent their models from hallucinating or spreading misinformation, but even these safeguards won’t entirely prevent the mirage effect, Asadi cautioned.

Jennifer Zieba earned her PhD in human genetics at the University of California, Los Angeles. She is currently a project scientist in the orthopedic surgery department at UCLA, where she works on identifying mutations and possible treatments for rare genetic musculoskeletal disorders. Jen enjoys teaching and communicating complex scientific concepts to a wide audience and is a freelance writer for several online publications.
